NLP+CSS · 2024

Can LLMs (or humans) disentangle text?

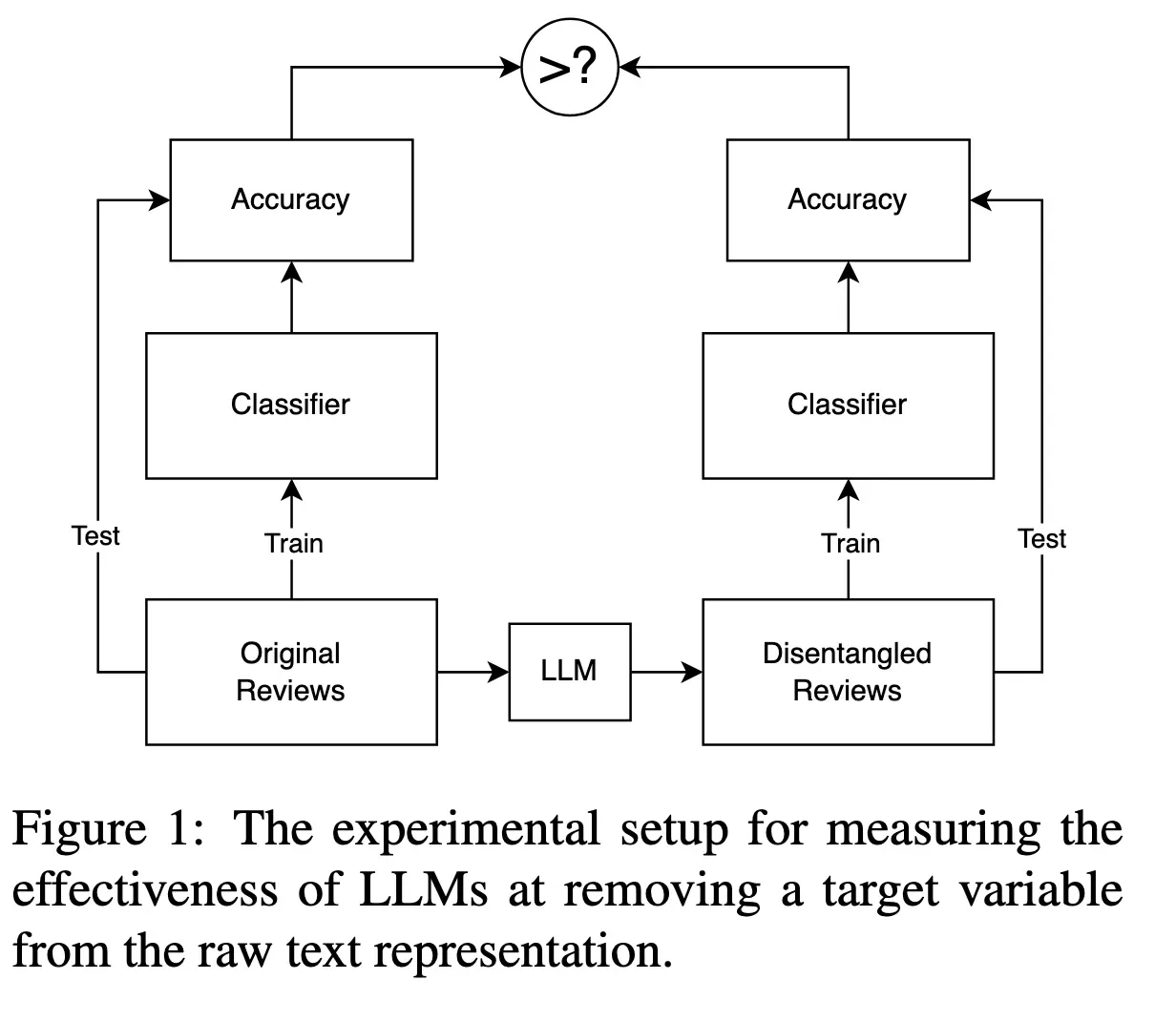

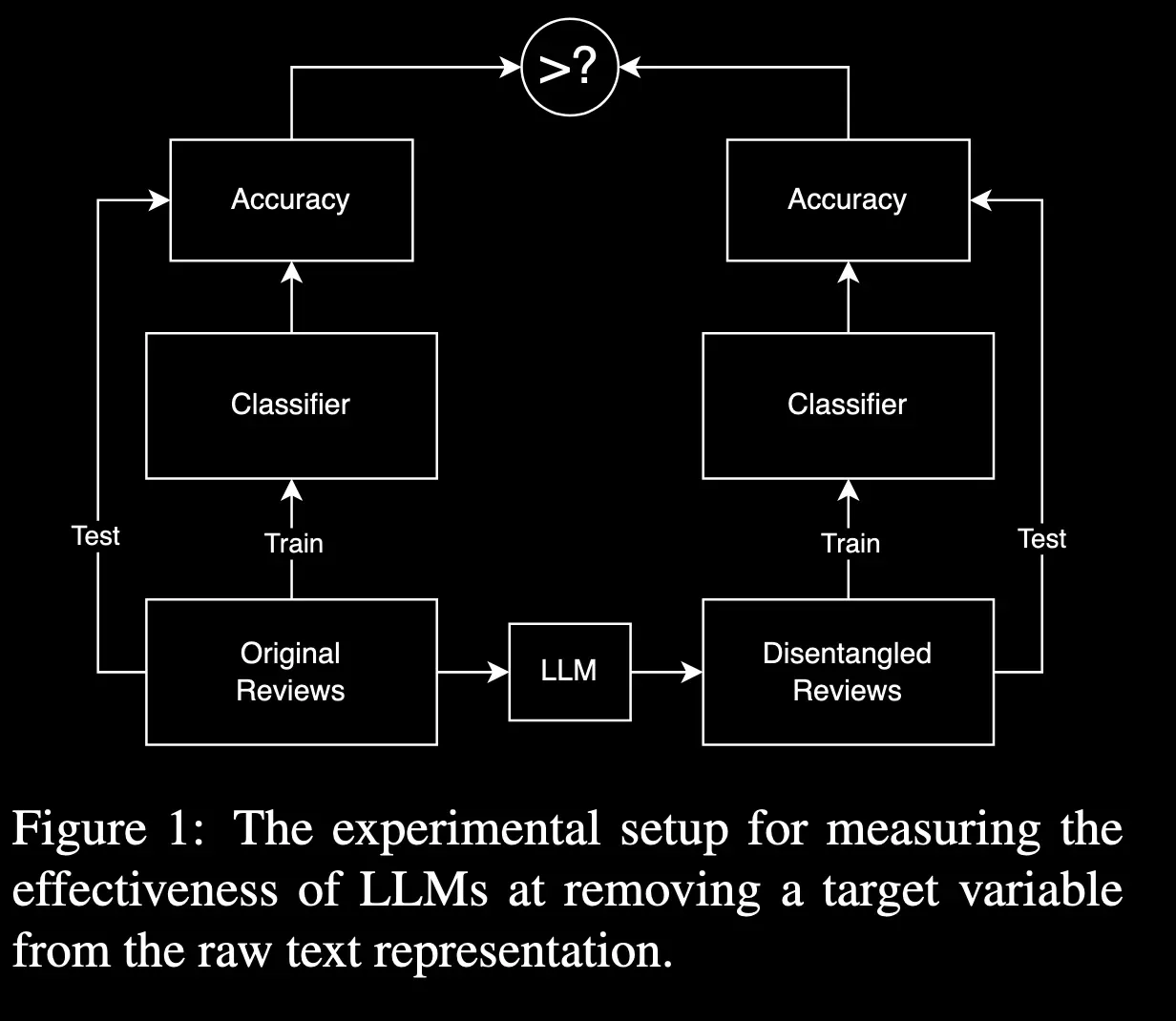

A question that sounds simple but sits at the core of using LLMs for social science measurement: can a model reliably separate the latent dimensions we care about, without leaking confounds or inventing structure?

Text-as-data · Evaluation · LLMs · Measurement

Summary

Evaluating LLM measurement reliability

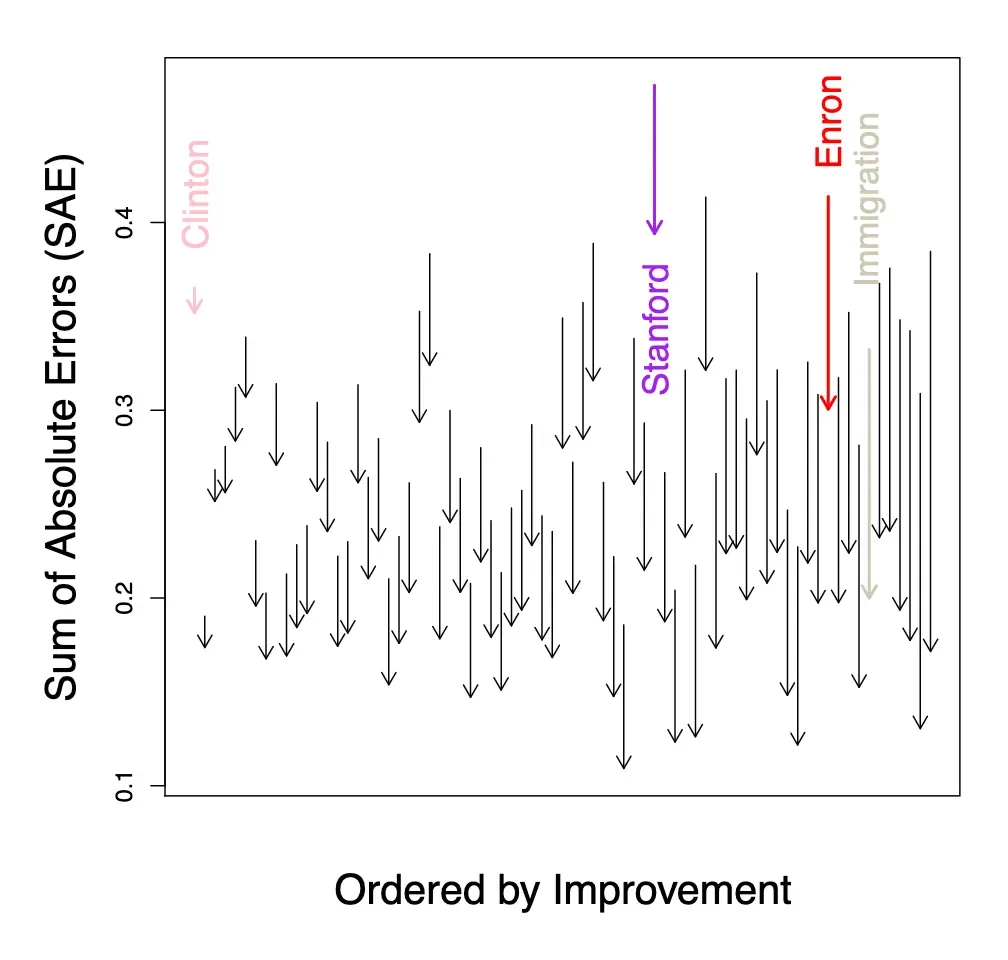

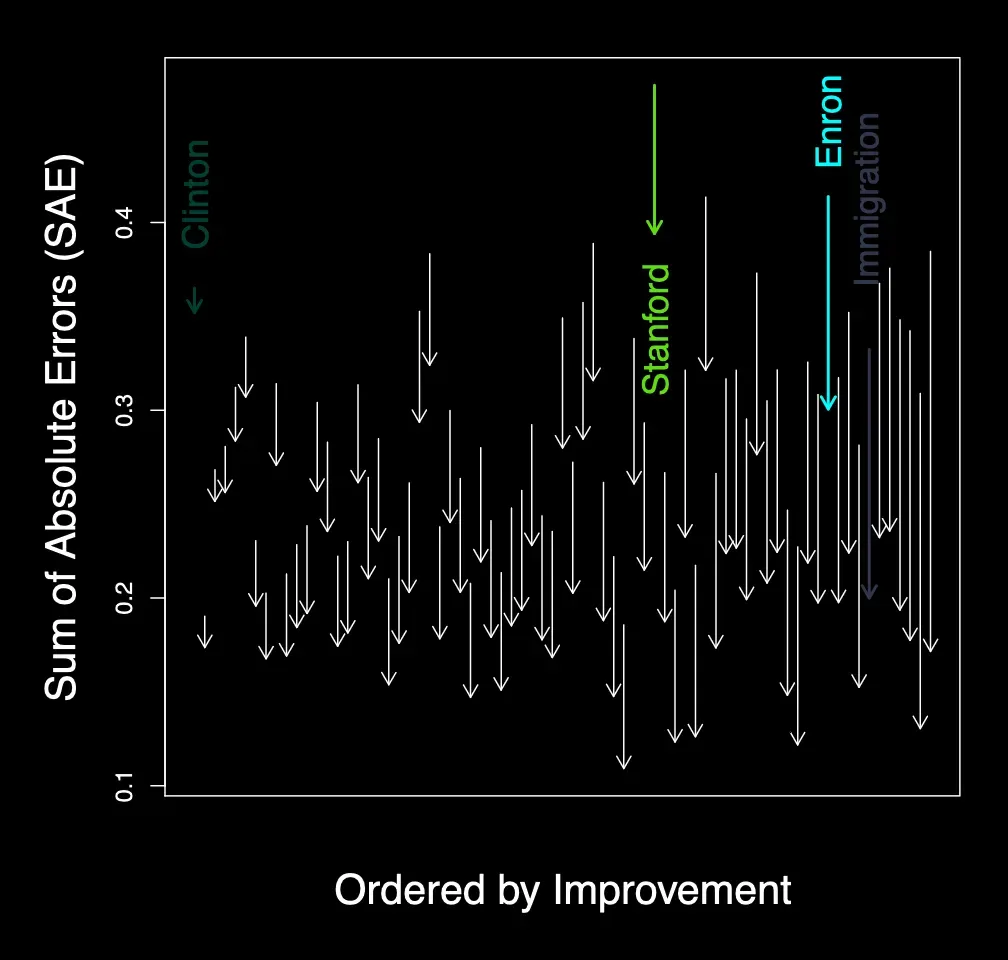

Models can produce fluent outputs, but measurement needs stability, calibration, and clearly characterized failure modes. We test reliability directly, using carefully designed tasks, baselines, and comparisons to human judgments.

Disentanglement · Leakage · Human comparison

Figures